Before you keep scrolling… pause for a second

Be honest—how many times have you:

- Liked something without really reading it

- Shared something because it “felt right”

- Seen the same post so many times that it just seemed true

This is not random. This is not just “people being dumb.”

This is how social media is designed.

And once you see it, you cannot unsee it… trust me.

This post is designed for college students and young adults who regularly use platforms like TikTok, Instagram, and X.

What is actually happening to you online

Social media platforms are not neutral. They are powered by algorithms optimized for engagement, meaning their job is to keep you scrolling, liking, and interacting for as long as possible! That is why one important step is to investigate the source of what you are seeing rather than accepting it at face value.

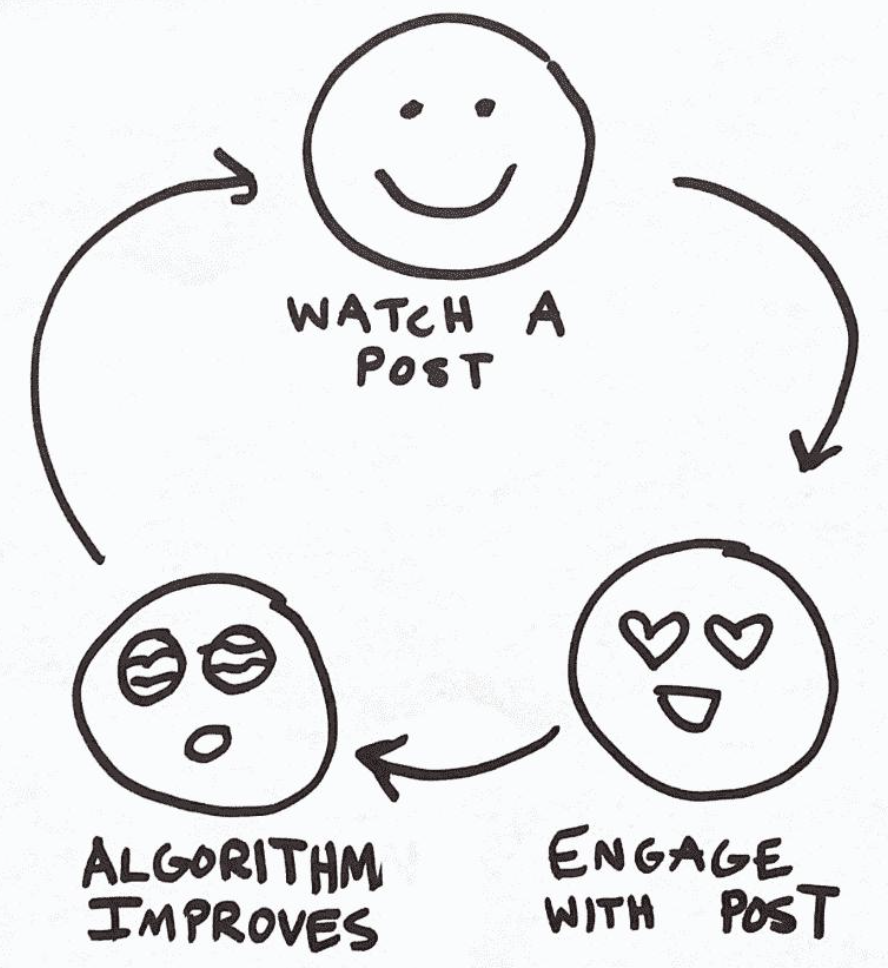

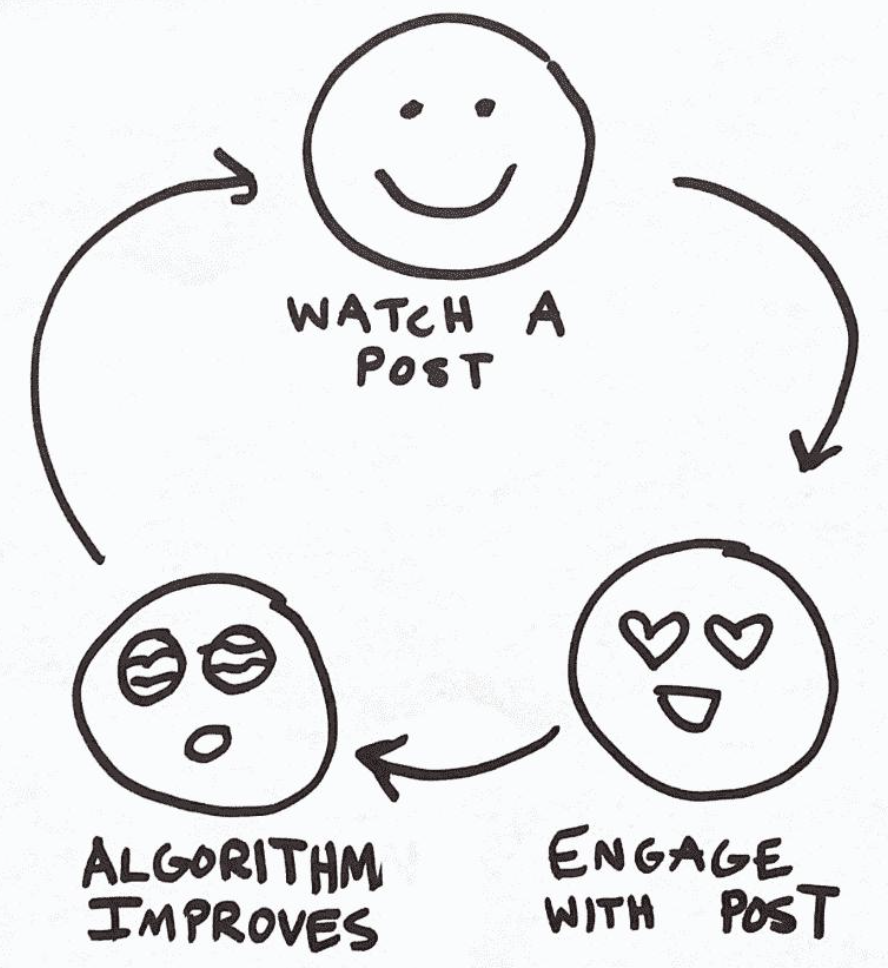

The more you interact with something, the more you see it.

Over time, this creates a feedback loop where:

- You see similar ideas

- Those ideas feel familiar

- Familiar starts to feel true

This is where misinformation starts to take hold, not because you are careless, but because the system is working exactly as designed. In this course, we learned that digital platforms are designed systems that shape how information is distributed, repeated, and interpreted by users. What you see online is not a random reflection of reality; it is a curated experience.

This is not just a theory. Internal research at Meta (then called Facebook), reported by The Wall Street Journal, showed that the company was aware of how its platforms affect users. Those internal findings revealed that Instagram could negatively affect mental health for some teenage users, suggesting that engagement-driven design can have real psychological effects.

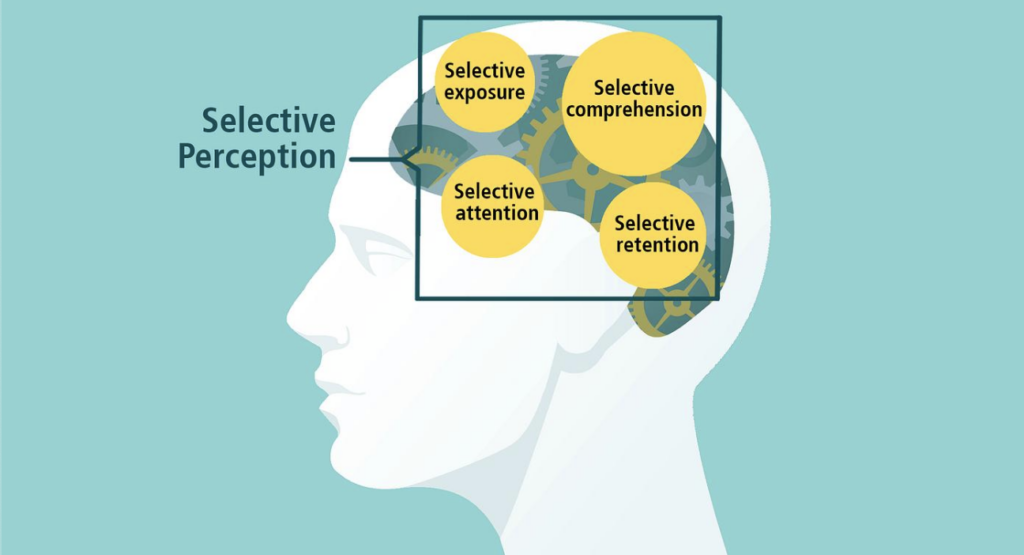

Your brain is wired for this: Confirmation Bias

One of the biggest reasons misinformation spreads is something called confirmation bias. In our course, this is understood as a cognitive bias that grows out of the mental shortcuts (heuristics) people use to process information quickly, especially in fast-paced digital environments.

This means you are more likely to:

- Believe information that matches what you already think

- Ignore or question information that challenges your views

On social media, this shows up constantly, and it is amplified because platforms reward engagement, not accuracy.

If you already believe something—even slightly—you are more likely to:

- Like posts that agree with it

- Watch those videos longer

- Engage with similar content

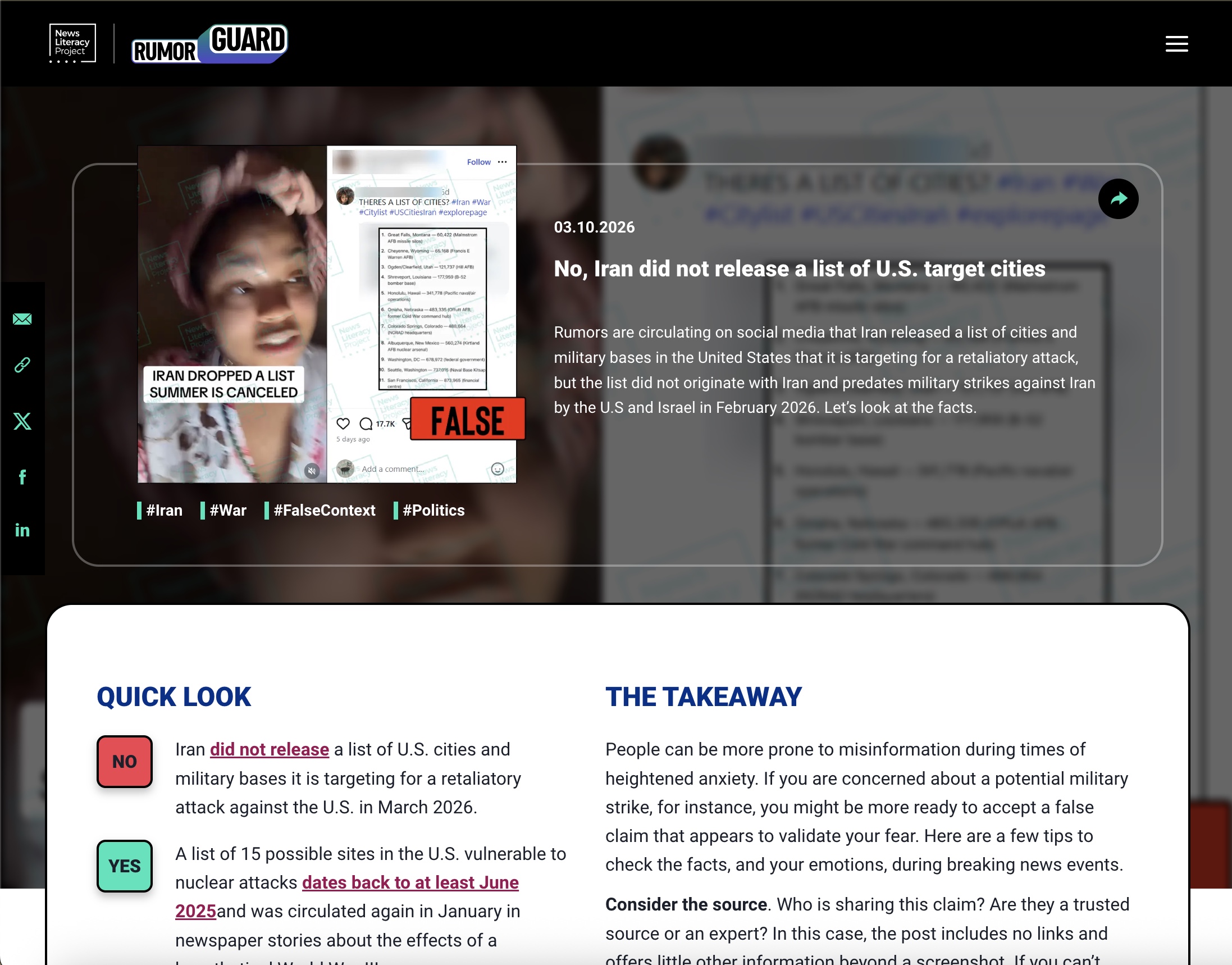

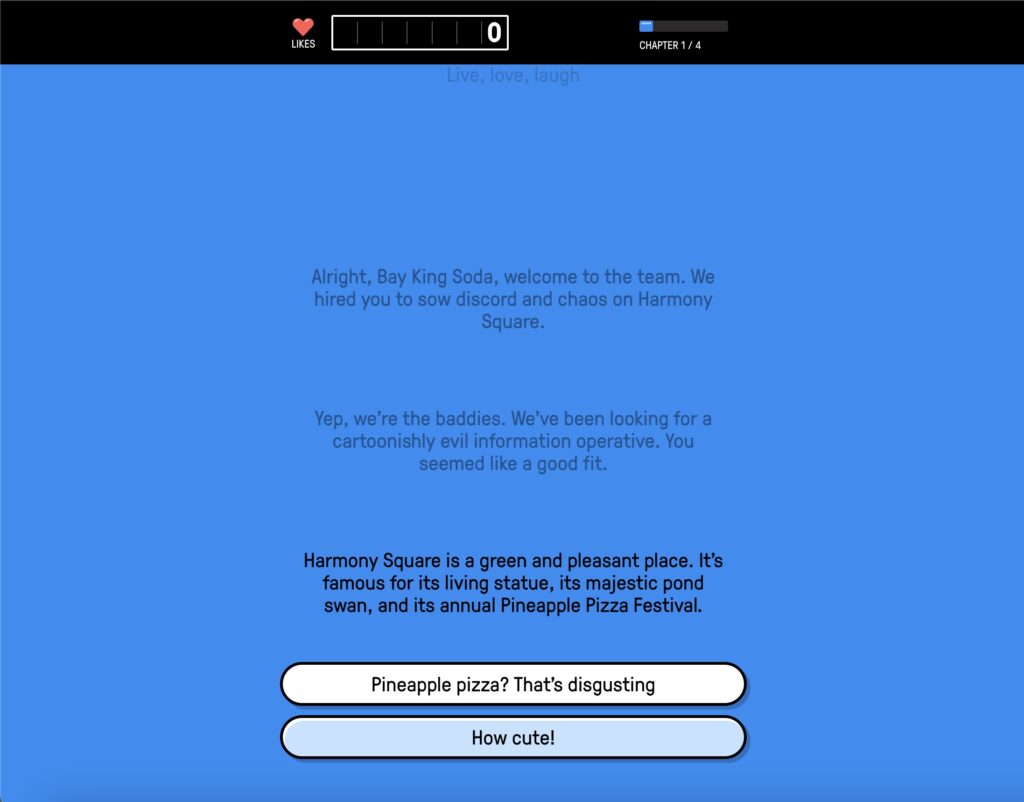

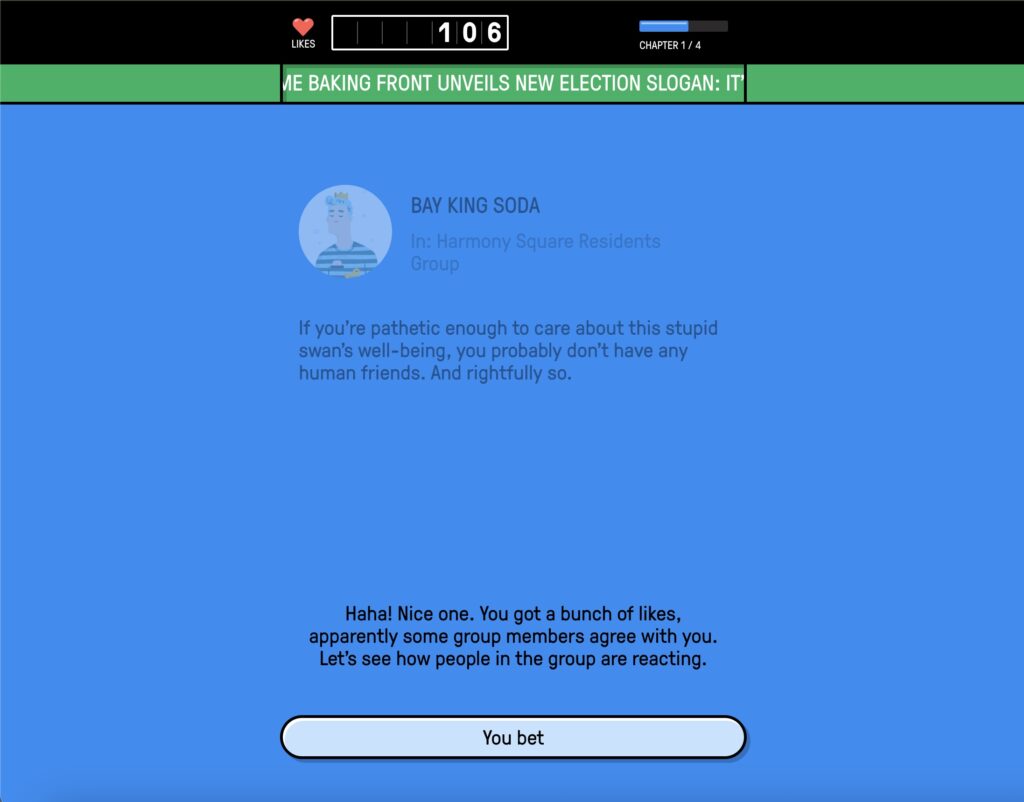

- Image 2: This visualization illustrates how mental shortcuts shape cognitive processing, with confirmation bias influencing how people interpret and reinforce information that aligns with their existing beliefs.

And guess what happens next?

The platform gives you more of it.

So it starts to feel like:

“Everyone is saying this… so it must be true.”

But really, you are just seeing more of what you already leaned toward.

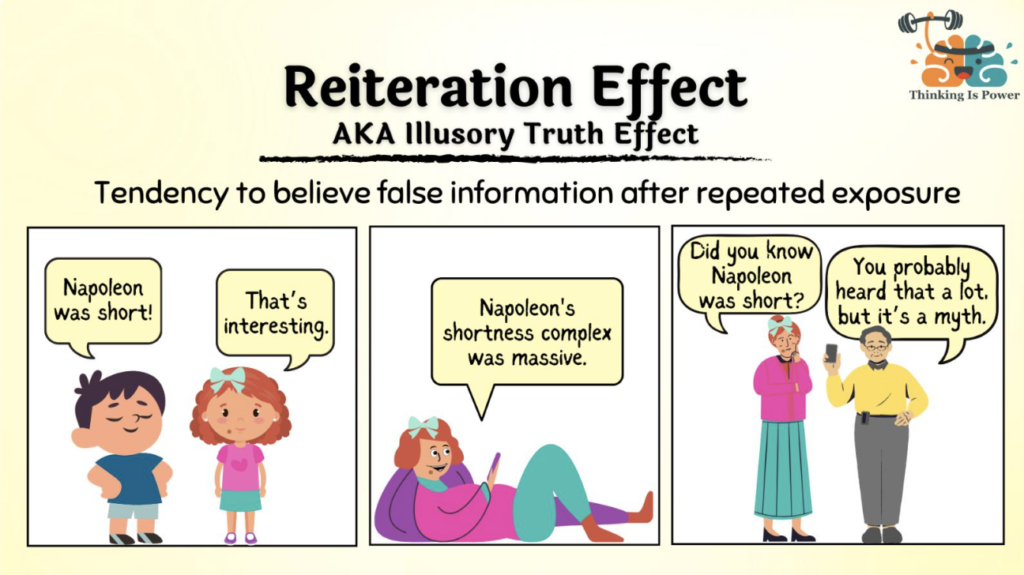

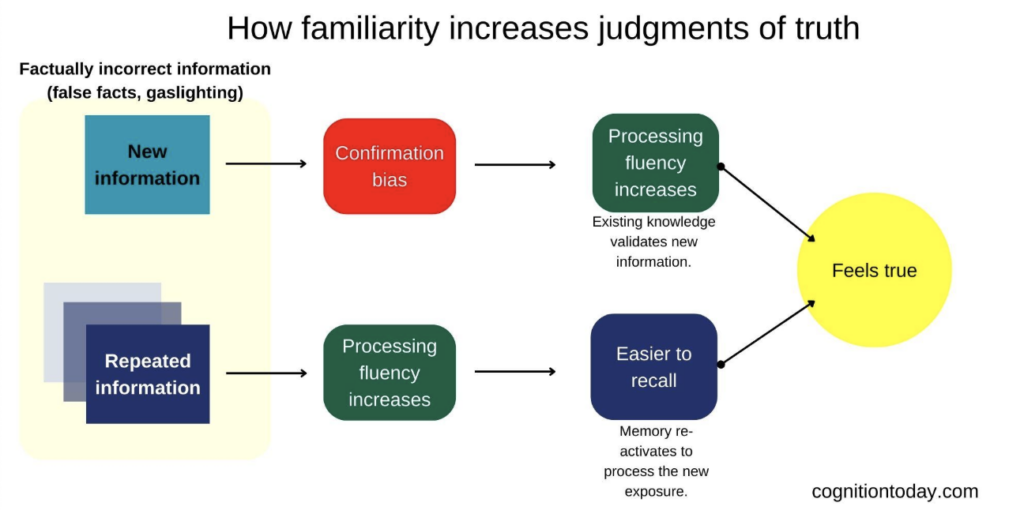

Repetition = Truth (even when it is not)

This is called the illusory truth effect. In our course, this concept is explained as a cognitive processing effect, where repeated exposure increases familiarity, and familiarity is often mistaken for truth.

Here is what that means in simple terms:

The more times you are exposed to something, the more true it feels.

Not because it is accurate, but because it is familiar.

Think about viral posts, trending sounds, or repeated claims.

Even if you were unsure at first, after seeing it:

- Once

- Twice

- Ten times

It starts to feel normal. Expected. True.

For example, a widely shared image appeared to show a New York Times opinion piece calling for teachers to tolerate bullying of unvaccinated children, but a Reuters fact-check found that the image was digitally altered and not real.

This is why misinformation spreads so fast: it does not need to be correct; it just needs to be repeated.

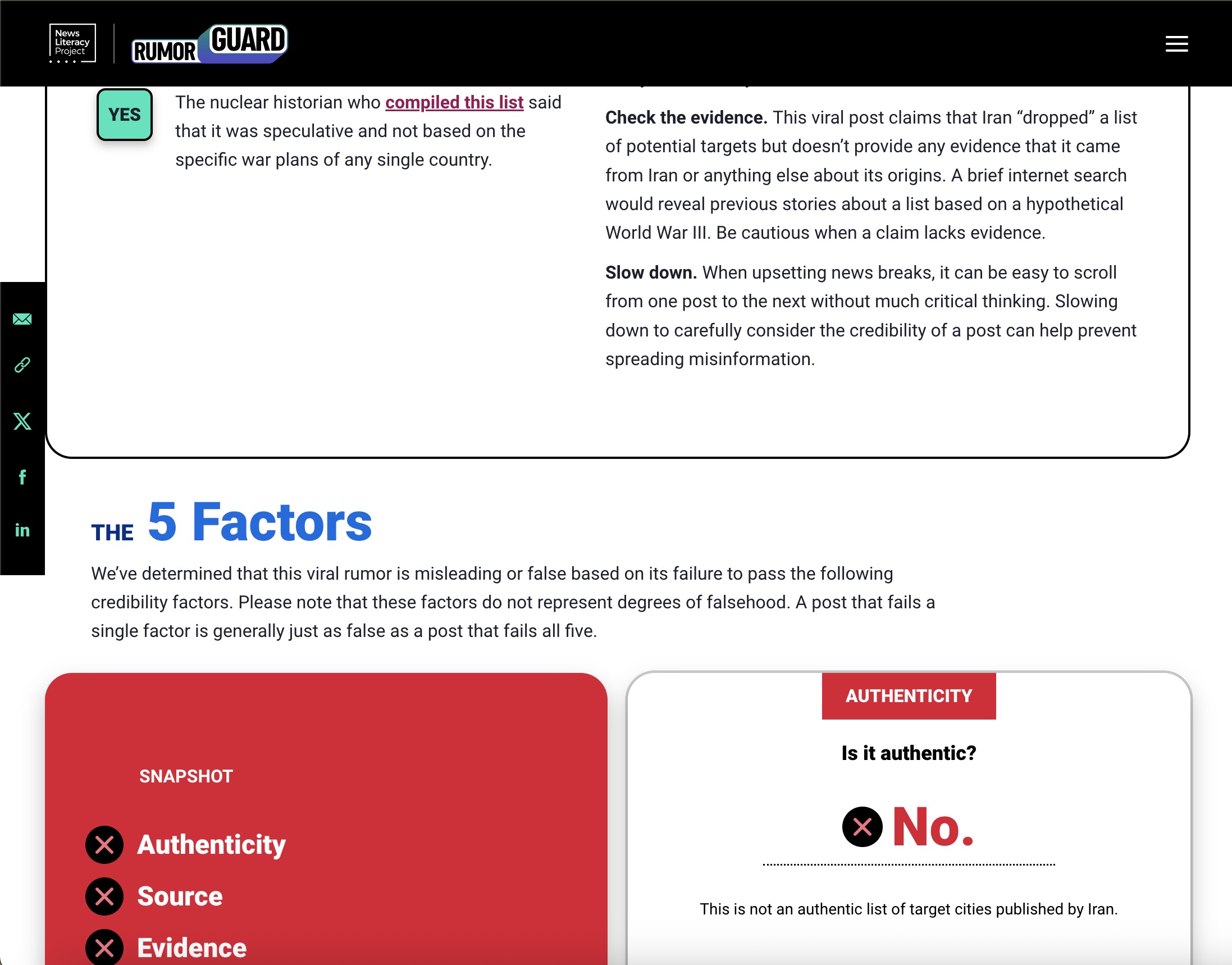

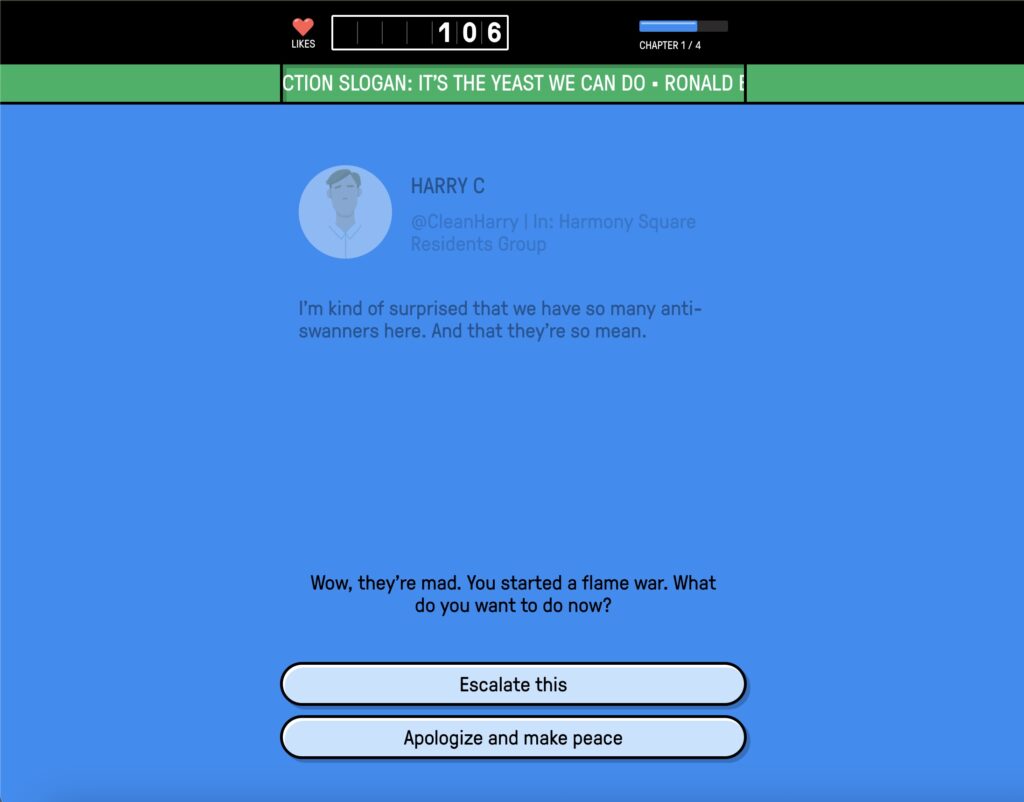

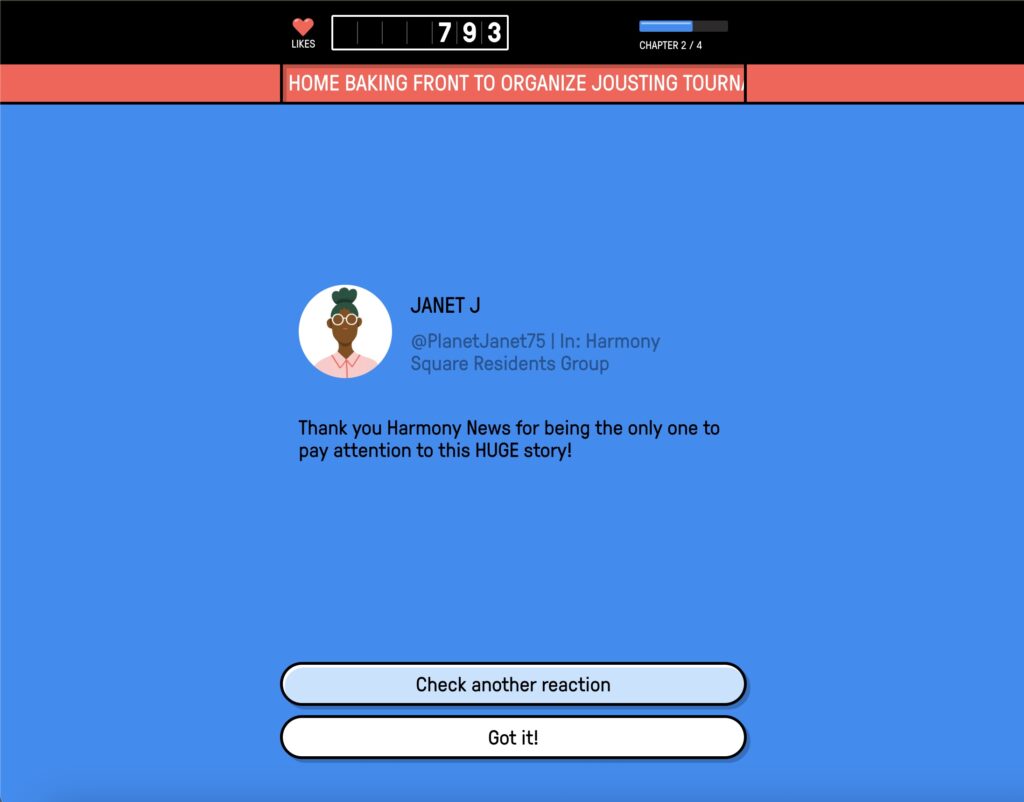

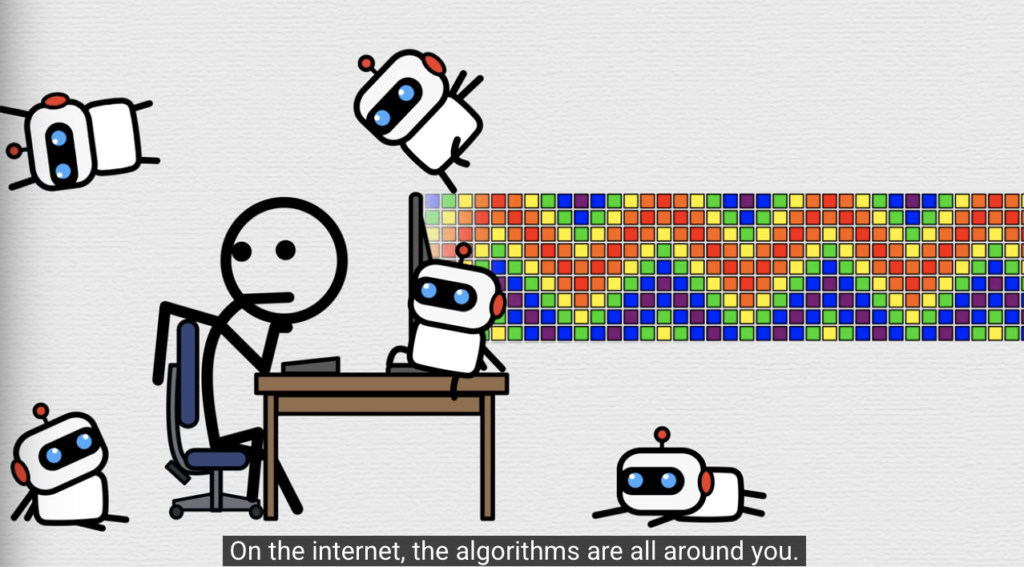

- Images 3 and 4: Both show the same idea. When we see something over and over, it starts to feel true, even if it is not. That is the illusory truth effect.

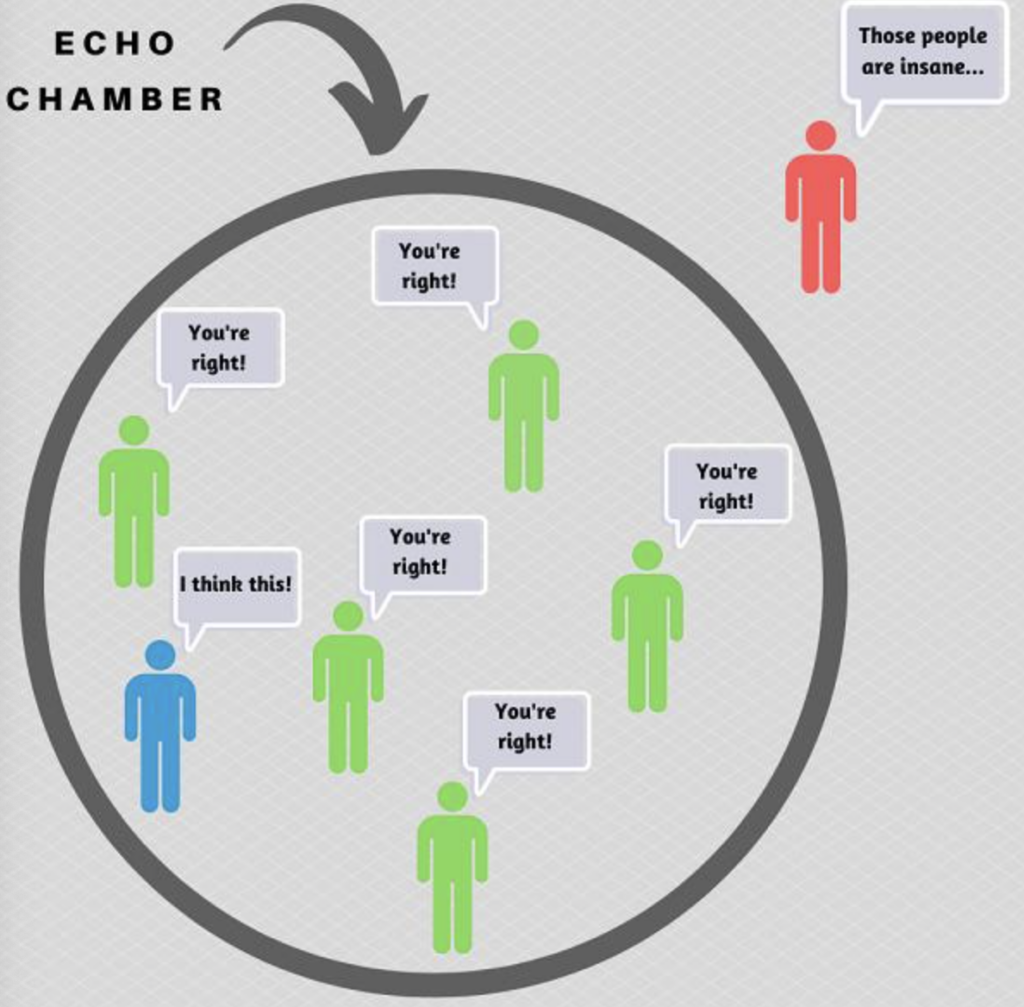

You are not seeing “everything” — you are in an Echo Chamber

Another major factor is something called echo chambers.

This happens when you are mostly exposed to:

- People who think like you

- Content that supports your views

- Ideas that are not challenged

Because of algorithmic feeds, what you see on social media is personalized to you.

Research shows that people do not receive information from a single source, but through multiple overlapping “curated flows,” where algorithms, social networks, and personal choices all shape what content is seen.

That means two people can search the same topic and see completely different “realities.”

Inside an echo chamber:

- Your beliefs are constantly reinforced

- Opposing views are filtered out

- Misinformation becomes harder to recognize

It does not feel like bias. It feels like the truth.

So what is the actual problem?

The issue is not just misinformation itself.

The issue is how:

- Confirmation Bias

- Illusory Truth Effect

- Echo Chambers

- Algorithmic Feeds

ALL work together!!!

Research from our course materials supports this. A large-scale study found that even small changes in what people are exposed to online can influence what they continue to engage with over time. As exposure increases, people are more likely to seek out similar content, reinforcing their existing beliefs and shaping how they interpret information.

This YouTube video shows how algorithms learn from your behavior and continuously recommend similar content, reinforcing what you see and making certain ideas feel more true over time.

This creates a system where:

- False information spreads easily

- Repeated ideas feel true

- Your beliefs get reinforced without you realizing it

And the most important part?

You think you are thinking for yourself.

These systems are designed to prioritize content that keeps people engaged, even if that content is misleading or emotionally charged. This means that what spreads the fastest is not always what is most accurate—but what gets the strongest reaction.

Pause and check yourself (seriously)

Take a second and ask yourself:

- When was the last time I checked a source before sharing?

- Do I ever see content that challenges my beliefs?

- Am I liking things because they are true—or because they feel right?

This is where awareness starts.

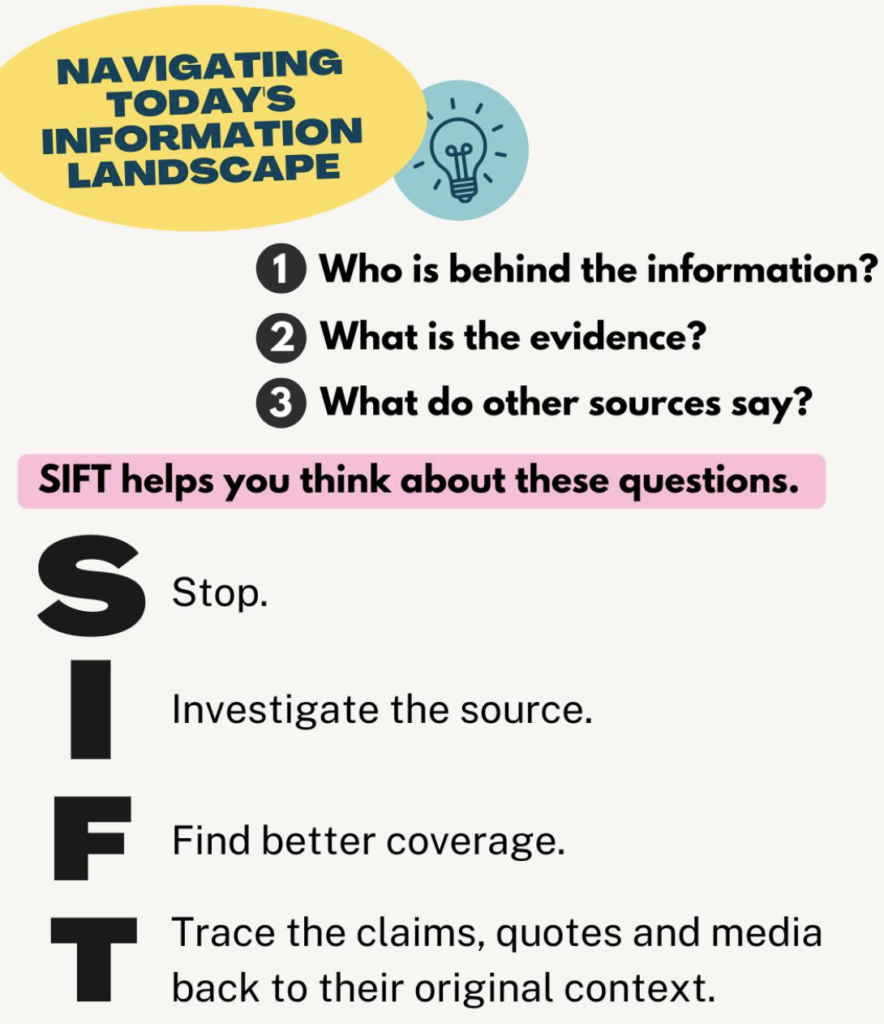

This approach is supported by strategies such as the SIFT method (Stop, Investigate the source, Find better coverage, Trace claims to their original context), which emphasizes pausing to verify sources before accepting or sharing information.

What can you actually do about it?

You do not need to become a fact-checking expert.

You just need to SLOW DOWN your automatic reactions.

Try this:

- Pause before liking or sharing

- Ask “Where did this come from?”

- Use strategies like the SIFT method to quickly evaluate information

- Be aware that what you see is curated—not neutral

Even small changes CAN break the cycle.

Once you see it… it changes everything

Misinformation is powerful not because people are unintelligent, but because it is built on how humans naturally think.

Social media just amplifies it.

Now you know:

- Why things feel true

- Why you keep seeing the same ideas

- How your own behavior plays a role

And that awareness alone puts you ahead of most people scrolling right now.

What This Helps You Do

After reading this, you should be able to:

- Recognize when familiarity is influencing what feels true

- Notice how algorithms are shaping what you see

- Pause before engaging with content automatically

- Apply simple strategies like the SIFT method to evaluate information

About This Project

- Target Audience: Young adults and college students who actively use platforms like TikTok, Instagram, and X, and regularly engage with content through scrolling, liking, and sharing.

- Geographic Scope: While misinformation is a global issue, this project focuses on social media use in the United States, where algorithm-driven platforms heavily shape information exposure.

- Why This Format: A blog-style format was chosen because it allows complex ideas to be broken into smaller, easy-to-follow sections using visuals and real-world examples. This mirrors how the target audience already consumes content, making the message more engaging and easier to understand.

- Purpose: This project is designed to help readers understand how misinformation works on both a psychological and algorithmic level, so they can become more aware of their own behavior online.

Sources / Learn More

Course Materials (ASU)

These modules build the core concepts used throughout this post:

- Module 4: Evaluating Sources & Digital Literacy (SIFT Method, verification skills)

  https://canvas.asu.edu/courses/241400/pages/module-4-learning-materials

- Module 5: Misinformation, Algorithms, and Media Systems

  https://canvas.asu.edu/courses/241400/pages/module-5-learning-materials

- Module 7: Digital Media, Influence, and Information Environments

  https://canvas.asu.edu/courses/241400/pages/module-7-learning-materials

Research & Real-World Sources

These sources provide real-world evidence of how misinformation spreads and how platforms shape what you see:

- Internal Facebook research on Instagram's effects on teen users (The Wall Street Journal)

  https://www.wsj.com/tech/personal-tech/facebook-knows-instagram-is-toxic-for-teen-girls-company-documents-show-11631620739

- How social media platforms handle misinformation (The New York Times)

  https://www.nytimes.com/2023/02/14/technology/disinformation-moderation-social-media.html

- Why platforms should not be trusted to shape information flow (Media@LSE blog)

  https://blogs.lse.ac.uk/medialse/2021/10/12/its-time-to-stop-trusting-facebook-to-engineer-our-social-world/

- Research on how online media exposure shapes beliefs (PNAS)

  https://www.pnas.org/doi/pdf/10.1073/pnas.2013464118

- Example of fact-checking misinformation in practice (Reuters)

  https://www.reuters.com/article/factcheck-new-york-times-altered-article/fact-check-image-purporting-to-show-new-york-times-opinion-piece-calling-for-teachers-to-tolerate-bullying-towards-unvaccinated-children-is-digitally-altered-idUSL1N2T81UK/

- How students can evaluate information like fact-checkers (The Chronicle of Higher Education)

  https://www.chronicle.com/article/students-fall-for-misinformation-online-is-teaching-them-to-read-like-fact-checkers-the-solution/

The goal is not to stop using social media—it is to stop letting it think for you.

You do not need to be perfect online.

You just need to be more intentional.

Pause.

Question what you see.

And remember—what feels true is not always what is true.